Repost from: https://martechtoday.com/5-ways-to-improve-roi-on-seasonal-pages-by-optimizing-your-seo-crawl-budget-223174

What is a crawl budget?

Google’s goal is to make useful information available to people searching the web. To accomplish that, Google wants to crawl and index content from quality sources.

Crawling the web is costly: Google uses as much energy per year as the entire city of San Francisco, just to crawl websites. To crawl as many useful pages as possible, bots must follow planning algorithms that prioritize which pages to crawl and when. Google’s page importance is the idea that there are measurable ways to determine which pages to prioritize.

There is no fixed allotment of crawls for each site. Instead, available crawls are distributed based on what Google thinks your server can handle and how much interest it believes users will have in your pages.

Your website’s crawl budget is a way of quantifying how much Google spends to crawl it, expressed as an average number of pages per day.

Why optimize your crawl budget?

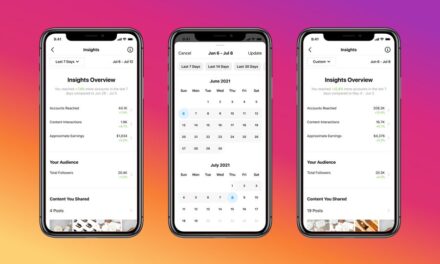

Thanks to OnCrawl’s data on hundreds of millions of pages, we’ve also learned that there is a strong correlation between how frequently Google crawls a page and the number of impressions it receives: pages that are crawled more often are seen more often in search results.

Relation between number of impressions and crawl frequency

This correlation means that you can use crawl budget optimization as a strategy to promote a group of pages in search results. If your website has seasonal pages, these pages can be excellent candidates for promotional campaigns based on optimized crawl frequency.

To bring these pages to the forefront in search results, you’ll need to promote them to Google above other types of pages in your website during the appropriate seasonal period.

Using crawl budget optimization strategies, you can draw Google’s attention to certain pages and away from others in order to increase impressions on pages subject to seasonality on your website.

You’ll want to:

- Optimize your general crawl budget.

- Reduce the depth of important seasonal pages using “collections” linked from category home pages in your site structure.

- Increase the internal popularity of important pages by creating backlinks from related pages.

Relation between the number of internal “follow” links and crawl frequency

#1 Monitor your crawl budget

Google Search Console will provide composite crawl stat values for visits from all Google bots. In addition to the official 12 bots, at OnCrawl we’ve noticed a new bot emerging: Google AMP bot. This data includes all URLs — including JavaScript, CSS, font and image URLs — for all bot hits. Because of differences in bot behavior, the values given are averages. For example, since AdSense and mobile bots must fully render each page, unlike the desktop Googlebot, the page load time provided is an average between the full and partial load times.

This isn’t precise enough for SEO analyses.

Therefore, the most reliable way to measure your site’s crawl budget is to inspect your site’s server logs regularly. If you’re not familiar with server logs, the principle is straightforward: web servers record every activity. These logs are usually used to diagnose site performance issues.

One activity logged is the request for a URL. In the log, lines for this type of activity will include information about the IP address making the request, the URL, the date and time and the result in the form of a status code.

Here’s an example:

www.mywebsite.com:443 66.249.73.156 [15/Aug/2018:00:02:59 +0000] "GET /news/my-article-URL HTTP/1.1" 200 44506 "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"

By identifying all of the requests from Google’s search bots, you can accurately measure the number of Google bot hits in a given period of time. This is your crawl budget.
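As a rough illustration of that counting step, here is a minimal Python sketch. It assumes your log lines follow the same layout as the example above, written with plain double quotes as servers emit them. Your own server may use a different layout, so the pattern will likely need adapting. The function names are illustrative, and matching on the user agent string alone does not prove that a hit really came from Google.

```python
import re
from collections import Counter
from datetime import datetime

# Pattern for the log layout shown in the example line; this layout is an
# assumption, and other server configurations will need a different pattern.
LOG_PATTERN = re.compile(
    r'^(?P<host>\S+) (?P<ip>\S+) \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) (?P<bytes>\d+|-) "(?P<agent>[^"]*)"$'
)

def parse_line(line):
    """Return a dict of fields for one log line, or None if it does not match."""
    match = LOG_PATTERN.match(line.strip())
    if match is None:
        return None
    hit = match.groupdict()
    hit["time"] = datetime.strptime(hit["time"], "%d/%b/%Y:%H:%M:%S %z")
    hit["status"] = int(hit["status"])
    return hit

def googlebot_hits_per_day(lines):
    """Count hits per calendar day whose user agent mentions Googlebot."""
    per_day = Counter()
    for line in lines:
        hit = parse_line(line)
        if hit and "Googlebot" in hit["agent"]:
            per_day[hit["time"].date()] += 1
    return per_day

def average_daily_hits(per_day):
    """Average number of hits per day over the days present in the log."""
    return sum(per_day.values()) / len(per_day) if per_day else 0.0
```

Feeding the lines of a log file to `googlebot_hits_per_day` and passing the result to `average_daily_hits` gives the average number of Googlebot hits per day for the period the file covers.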

This number can’t tell you if Google’s giving your site enough attention. SEO crawlers with log monitoring capabilities, like OnCrawl, provide additional metrics to diagnose the health of your crawl budget.

Because your crawl budget is what allows new and updated pages to be indexed, it’s essential to address problems and sudden changes quickly.

#2 Fix server issues

If your site is too slow or your server returns too many timeouts or server errors, Google will conclude that your website cannot support a higher demand for its pages.

You can correct a perceived server problem by fixing 400- and 500-level status codes and by modifying server-related factors for page speed.

Because logs indicate both status codes returned and number of bytes downloaded, log monitoring is key to diagnosing and correcting server issues.
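To make this concrete, the sketch below summarizes the status codes seen in bot hits. It assumes you have already extracted the status code of each hit from your logs as an integer. The function names are hypothetical, and what counts as too many errors is left for you to judge.

```python
from collections import Counter

def status_class_shares(statuses):
    """Share of hits per status class ('2xx', '4xx', ...) for integer status codes."""
    counts = Counter(f"{status // 100}xx" for status in statuses)
    total = sum(counts.values())
    return {cls: n / total for cls, n in counts.items()} if total else {}

def error_rate(statuses):
    """Combined share of 400- and 500-level responses."""
    shares = status_class_shares(statuses)
    return shares.get("4xx", 0.0) + shares.get("5xx", 0.0)
```

Tracking `error_rate` over time, for example day by day, is one way to spot a sudden rise in errors served to bots.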

If your site is hosted on a shared server, you can still improve server performance through caching, CDNs, appropriately sized images, updating your PHP version, and using lazy or asynchronous loading techniques for resources.

#3 Waste not, want not

Keep Google focused on pages you want to rank and away from the bowels of your site. Often, your crawl budget isn’t used to discover new or updated pages because it’s spent on other things.

Your log monitoring data will provide a picture of what Google crawls — and what it never discovers — on your site.

Integrating log data with data from an SEO crawler will help you answer the following questions:

- Are there pages being crawled despite being non-indexable? (Are they in the sitemap?)

- Are there pages being crawled that don’t return a 200 status code?

- Is Google crawling URLs for images, PDFs and other media?

- Is Google crawling pages you have no user hits for?

- Is Google crawling lots of redirected pages?

If you can answer “yes” to any of these questions, you can free up crawl budget by directing bots not to crawl these resources. Prioritize the topics consuming the most budget.
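Several of these questions can be sketched as a simple classification of bot hits. The example below assumes you have a list of `(path, status)` pairs taken from bot log lines and a set of non-indexable paths exported from an SEO crawler. The category names, the list of media extensions and the function names are illustrative assumptions, and the question about user hits is left out because it needs analytics data.

```python
from collections import Counter
from urllib.parse import urlsplit

# Illustrative list of extensions treated as media; extend it for your site.
MEDIA_EXTENSIONS = (".jpg", ".jpeg", ".png", ".gif", ".svg", ".webp", ".pdf", ".mp4")

def classify_hit(path, status, non_indexable_paths):
    """Assign one bot hit to the first matching category."""
    clean_path = urlsplit(path).path.lower()  # drop the query string
    if 300 <= status < 400:
        return "redirect"
    if status != 200:
        return "non-200"
    if clean_path.endswith(MEDIA_EXTENSIONS):
        return "media"
    if path in non_indexable_paths:
        return "non-indexable"
    return "useful"

def budget_by_category(hits, non_indexable_paths):
    """Count hits per category, largest first. hits: iterable of (path, status)."""
    counts = Counter(classify_hit(p, s, non_indexable_paths) for p, s in hits)
    return counts.most_common()
```

The categories at the top of the result, other than "useful", are the ones consuming the most budget.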

Additionally, OnCrawl’s analyses can reveal relations between:

- Depth of pages in your site structure and page crawl frequency.

- Status codes and page crawl frequency.

- Popularity of pages by number of hits and page crawl frequency.

- Internal linking structure and page crawl frequency.

If you’re promoting seasonal pages, this is where you can make the most difference. These relations indicate the best types of content and structure in your site. Modify the linking structure of seasonal pages accordingly, and place these pages in optimal site depths, ahead of other pages.
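One way to look at the first of these relations yourself is to average bot hits by page depth. This sketch assumes two inputs that are not described in detail above: a mapping from each page path to its depth, as reported by a site crawl, and a mapping from each path to its number of bot hits in your logs. Both the input shapes and the function name are hypothetical.

```python
from collections import defaultdict

def mean_hits_by_depth(depth_by_path, hits_by_path):
    """Average number of bot hits per page at each depth of the site structure."""
    totals = defaultdict(lambda: [0, 0])  # depth -> [hit total, page count]
    for path, depth in depth_by_path.items():
        totals[depth][0] += hits_by_path.get(path, 0)  # uncrawled pages count as 0
        totals[depth][1] += 1
    return {depth: total / pages for depth, (total, pages) in sorted(totals.items())}
```

If the averages drop off at a certain depth on your site, that suggests seasonal pages should sit above it.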

Finally, log monitoring and site crawl data will bring to light any orphan pages (pages not linked to in your site’s structure) that are crawled by Google. If these pages receive visits from Google, reconnect them to your site structure to take advantage of this traffic. Otherwise, take them down or disallow robots.
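The orphan check comes down to a set difference, as in this minimal sketch. It assumes three lists of paths that you would need to assemble yourself: paths requested by Google bots (from logs), paths found by following links in a site crawl, and paths that received visits from Google search. The function names are hypothetical.

```python
def find_orphans(googlebot_paths, structure_paths):
    """Paths crawled by Google that the site crawl never reached through links."""
    return sorted(set(googlebot_paths) - set(structure_paths))

def split_orphans(orphans, organic_visit_paths):
    """Separate orphans worth reconnecting from those to take down or disallow."""
    visited = set(organic_visit_paths)
    reconnect = [p for p in orphans if p in visited]
    retire = [p for p in orphans if p not in visited]
    return reconnect, retire
```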

#4 Optimize for Googlebot

Humans can do all sorts of things that bots can’t — and shouldn’t. For example, bots should be able to access your signup page, but they shouldn’t try to sign up or sign in. Bots don’t fill out contact forms, reply to comments, leave reviews, sign up for newsletters, add items to a shopping cart or view their shopping basket.

Unless you tell them not to, however, they’ll still attempt to follow these links. Make good use of nofollow links and restrictions in your robots.txt file to keep bots away from actions they can’t complete. You can also move parameters that only record a user’s options or view into a cookie, and restrict infinite spaces such as calendars and archives. This frees up crawl budget to spend on pages that matter.
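Before deploying robots.txt rules, it can help to check which URLs they would actually block. The sketch below uses Python’s standard `urllib.robotparser` module for that. The rules and paths are purely hypothetical examples, not recommendations for any particular site, and Python’s parser may not interpret every rule exactly as Googlebot does.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules: keep bots out of the cart, sign-in and calendar pages.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart
Disallow: /signin
Disallow: /calendar/
"""

def blocked_paths(robots_txt, paths, user_agent="Googlebot"):
    """Return the paths that the given rules disallow for this user agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [p for p in paths if not parser.can_fetch(user_agent, p)]
```

For instance, calling `blocked_paths(ROBOTS_TXT, ["/signup", "/signin"])` lets you confirm that the signup page stays accessible while the sign-in action is blocked.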

#5 Improve content quality

Official statements from Google, whether by representatives or on the webmaster support pages, indicate that your crawl budget is strongly influenced by the quality of your content.

Evidence from combining log data and semantic analysis by OnCrawl supports this fact. We’ve found most sites show a relationship between:

- Number of words and crawl behavior.

- Duplicate content and crawl behavior.

- Internal PageRank and crawl behavior.

You should also leverage the advantage of quality content to reinforce weaker pages through the use of:

- External backlinks.

- Internal linking structures.

- Canonical optimization.

If you’re promoting seasonal pages, concentrate on optimizing them first. Reports from site audits and site crawls indicate which pages in these groups would profit most from improvement.

Your healthy crawl budget

A healthy crawl budget is the key to improving ROI on SEO efforts by ensuring that Google sees the pages you’ve optimized.

Once you’ve made improvements, continue monitoring the crawl budget of your site. This allows you to measure the results and be ready to react to changes.